An international “grand committee” of lawmakers called on Thursday for a pause on online micro-targeted political advertisements with false or misleading information until the area is regulated.

The committee, formed to investigate disinformation, gathered in Dublin to hear evidence from Facebook Inc, Twitter Inc, Alphabet Inc’s Google and other experts about online harms, hate speech and electoral interference. The meeting was attended by lawmakers from Australia, Finland, Estonia, Georgia, Singapore, the UK and the United States.

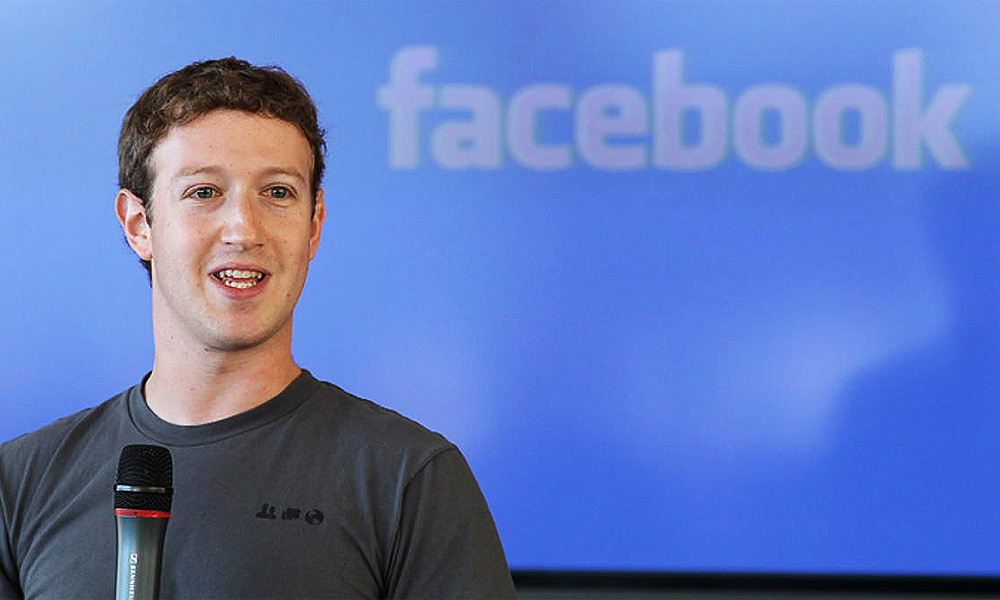

The committee’s inaugural session in London last November featured an empty chair for Facebook chief executive Mark Zuckerberg after he declined to be questioned.

Facebook has been under scrutiny in recent weeks over its decision not to fact-check ads run by politicians, scrutiny that intensified when rival Twitter announced last month that it would ban all political ads.

Zuckerberg has defended this policy, saying that the company does not want to stifle political speech.

Politicians can micro-target groups of voters on social media based on user data such as location, age and interests, a practice critics fear could intensify the effects of false or misleading information on certain groups and suppress voter turnout.

At a conference in Lisbon on Thursday, Europe’s antitrust chief Margrethe Vestager said, “If it’s only in your feed, between you and Facebook, and their micro-targeting of who you are, that’s not democracy anymore.”

Doctored video

Facebook said on Thursday a doctored video shared by Britain’s governing Conservatives would not have broken its rules on political advertising if it had run as a paid-for ad.

“Ads from political parties and political candidates are not subject to our fact-checking rules,” Rebecca Stimson, Facebook’s head of UK Public Policy, told reporters on a call to explain the company’s policies ahead of Britain’s Dec 12 election.

“What that has meant is what the Conservative party put in that advert has been the subject of ferocious public debate and discussion, precisely because people could see that it was there,” Stimson said.

Facebook partners with global third-party fact-checking organisations to curb misinformation on the site.

Ahead of an election that could shape the fate of Brexit, some politicians have expressed concerns that misleading information could spread swiftly across social media.

British Prime Minister Boris Johnson’s party chairperson was forced to defend the distribution of a doctored video clip of a rival Labour Party politician on Wednesday, overshadowing the launch of the party’s election campaign.

Johnson’s Conservatives posted the heavily edited video clip of Labour’s Brexit spokesman Keir Starmer on Facebook and Twitter, editing out a key response in an interview to give the impression that Labour had no answer for Brexit.

The video was shared as a normal post on the Conservatives’ Facebook page, but has not been used as a paid-for ad on the platform, according to a search of Facebook’s Ad Library, a database launched to increase political ad transparency.

- Reuters