AI has slipped quietly into everyday Malaysian life.

It drafts office emails before meetings, suggests songs during the drive home, and enhances photos before they are shared on Instagram.

It summarises long documents for students racing against assignment deadlines and, when prompted, recommends what to watch next.

Sometimes, it even sounds like it understands us.

Behind that smooth experience lies a more complex reality, one most users rarely pause to consider.

According to Benjamin Shepherdson, Head of Privacy at CelcomDigi, Malaysians are embracing AI-powered tools with growing enthusiasm.

The concern, he said, is not how quickly people are adopting these technologies, but how deeply they understand what happens to the information they share.

“The issue is not speed of adoption,” he stressed. “It is whether users understand how these tools work and what happens to their data.”

The invisible trail behind everyday use

To most users, AI features are straightforward to use. They could be a filter on TikTok, a chatbot that helps draft a reply, or a voice assistant setting reminders.

These encounters may seem routine and insignificant, but Shepherdson explained that they are not.

A voice note recorded for convenience, a selfie uploaded for fun, a typed prompt asking for help with a report: each interaction becomes data.

Modern AI systems are trained on vast datasets and often continue learning from user behaviour. Depending on the platform’s policies, those inputs may be processed, stored, analysed or used to improve the system.

TikTok, for example, may feel like pure entertainment. Yet its recommendation engine learns continuously from what users watch, how long they linger, what they like and what they skip. The result is a highly personalised feed shaped by accumulated signals.

Productivity tools operate in similar ways. Digital assistants often require access to calendars, contacts and voice inputs to function effectively. The benefit is efficiency. The trade-off is access to deeply personal context.

What concerns Shepherdson is that many Malaysians do not realise how layered this data collection can be.

AI platforms may retain conversation histories, use anonymised inputs to refine models, or log interactions for security and performance purposes.

Retention practices differ between providers. For users, that variation matters, particularly when sharing sensitive or confidential information.

When technology feels personal

Part of AI’s appeal lies in its design. Many tools are built to feel conversational and responsive.

For younger users especially, interacting with AI can feel no different from messaging a friend or asking a colleague for help. However, that familiarity can blur boundaries.

“These interactions are driven by data, not empathy,” Shepherdson cautioned. The sense of responsiveness can make it easy to forget that every exchange feeds a system designed to process and learn from inputs.

He also noted that some applications collect more data than is strictly necessary for their core function. Users often agree to these permissions because the service works better, loads faster or unlocks additional features.

Over time, this behaviour becomes normal. Extensive data access begins to feel like the standard cost of participation in digital life.

When trust is exploited

The consequences of misplaced trust are no longer theoretical.

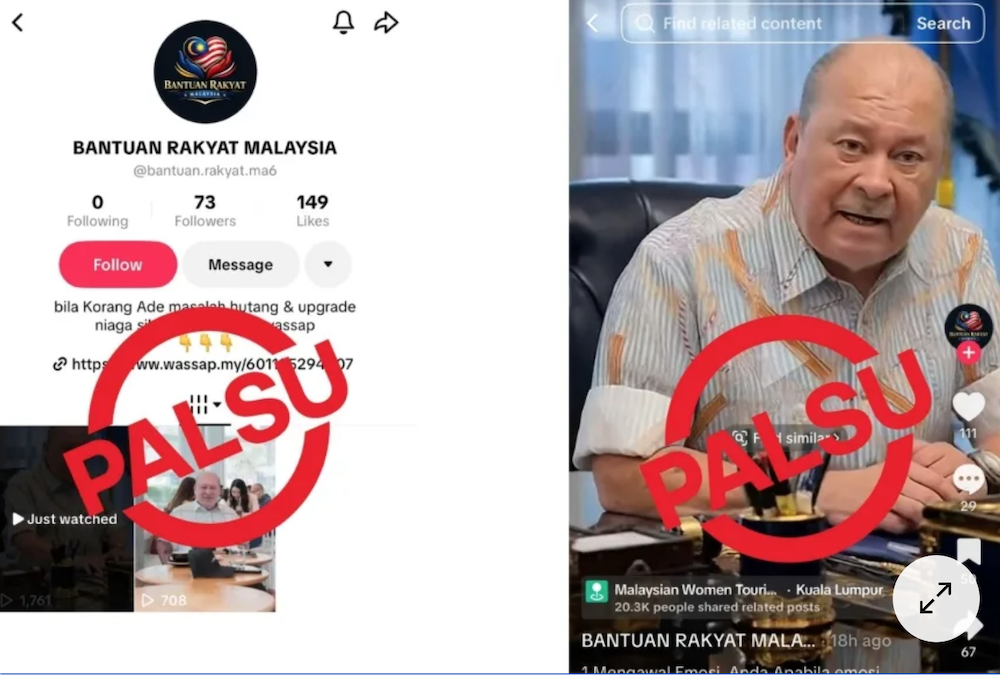

AI-generated impersonation scams, in which cloned voices and fabricated videos are used to deceive victims, have become commonplace.

Such a scam could take the form of a familiar voice asking for urgent financial help or a convincing video message that appears authentic.

These technologies can mimic real individuals with alarming accuracy.

From Shepherdson’s perspective, awareness is the first line of defence. Users should be cautious about sharing voice recordings or facial data publicly and verify unexpected requests through trusted channels, especially when money or sensitive information is involved.

Tools such as multi-factor authentication add another layer of protection, but technological safeguards alone are not enough if users are unaware of the risks.

The responsibility to build trust

Shepherdson emphasised that organisations must also play their part.

At CelcomDigi, he said, efforts go beyond regulatory compliance. The telco focuses on strengthening privacy safeguards, promoting digital literacy so users better understand how their data is used, and working closely with regulators to support responsible technology practices.

Trust, in his view, cannot be assumed simply because a service is popular or convenient. It must be earned through transparency, accountability and meaningful user control.

That includes seeking explicit consent before using deeply personal identifiers, such as faces, voices or chat histories, to train AI systems. Consent, he stressed, should be clear and revocable, not hidden within lengthy, jargon-filled terms and conditions.

He also advocates privacy-by-design, ensuring that data minimisation and secure storage are built into systems from the outset rather than treated as secondary considerations.

Living with AI - wisely

AI is now part of daily Malaysian routines: at work, at school, even at home.

It offers real advantages: speed, convenience, creativity and access to information. However, the long-term health of the digital ecosystem depends on whether users understand the trade-offs involved.

The question is no longer whether Malaysians will live with AI. They already do.

The question is whether they will use it with enough awareness to protect their personal information while enjoying its benefits.

This S.A.F.E. Internet Series is in collaboration with CelcomDigi.

The views expressed here are those of the author/contributor and do not necessarily represent the views of Malaysiakini.